Testing is expected to be performed on Windows (x86_64) and Linux (x86_64), and perhaps Mac OS X. The following JVMs may be tested for acquiring dumps and for analyzing dumps, generally on the Windows platform.

For Windows, it's in the same directory as the eclipse.exe file, as shown in the image below. For Mac OS X, it's found inside the app. So if the Eclipse app is in the Downloads directory, the eclipse.ini file location will be: You can reach this location by first right-clicking on the Eclipse app and clicking on “Show.

I have a HotSpot JVM heap dump that I would like to analyze. The VM ran with -Xmx31g, and the heap dump file is 48 GB large. I won't even try jhat, as it requires about five times the heap memory (that would be 240 GB in my case) and is awfully slow. Eclipse MAT crashes with an ArrayIndexOutOfBoundsException after analyzing the heap dump for several hours.

What other tools are available for that task? A suite of command line tools would be best, consisting of one program that transforms the heap dump into efficient data structures for analysis, combined with several other tools that work on the pre-structured data.

Normally, what I use is ParseHeapDump.sh, included with Eclipse MAT, and I run it on one of our more beefed-up servers (download and copy over the linux.zip distro, unzip there). The shell script needs fewer resources than parsing the heap from the GUI, plus you can run it on your beefy server with more resources (you can allocate more resources by adding something like -vmargs -Xmx40g -XX:-UseGCOverheadLimit to the end of the last line of the script). For instance, the last line of that file might look like this after modification:

./MemoryAnalyzer -consolelog -application org.eclipse.mat.api.parse "$@" -vmargs -Xmx40g -XX:-UseGCOverheadLimit

Run it like:

./path/to/ParseHeapDump.sh ./todayheapdump/jvm.hprof

After that succeeds, it creates a number of 'index' files next to the .hprof file. After creating the indices, I try to generate reports from them, scp those reports to my local machine, and try to see if I can find the culprit just from that (just the reports, not the indices). Here's a tutorial on.
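The report step of this workflow could be wrapped in a small helper, sketched below. It runs ParseHeapDump.sh once per report ID, using the three IDs this answer names (suspects, overview, topcomponents). The function name run_reports and the MAT_HOME and DRY_RUN variables are made up for this sketch and are not part of MAT.

```shell
# Sketch only: run_reports, MAT_HOME and DRY_RUN are hypothetical names
# introduced for this example; they are not part of Eclipse MAT.
run_reports() {
    dump="$1"
    mat_home="${MAT_HOME:-.}"   # directory that contains ParseHeapDump.sh
    for report in org.eclipse.mat.api:suspects \
                  org.eclipse.mat.api:overview \
                  org.eclipse.mat.api:topcomponents; do
        if [ -n "${DRY_RUN:-}" ]; then
            # Dry run: print the command instead of executing it.
            echo "$mat_home/ParseHeapDump.sh $dump $report"
        else
            # Stop at the first report that fails.
            "$mat_home/ParseHeapDump.sh" "$dump" "$report" || return 1
        fi
    done
}
```

Calling run_reports ./todayheapdump/jvm.hprof with DRY_RUN=1 set first only prints the three commands, which is a cheap way to check paths before committing a big server to a multi-hour parse.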

Example report:

./ParseHeapDump.sh ./todayheapdump/jvm.hprof org.eclipse.mat.api:suspects

Other report options: org.eclipse.mat.api:overview and org.eclipse.mat.api:topcomponents

If those reports are not enough and I need to dig further (say, via OQL), I scp the indices as well as the hprof file to my local machine, and then open the heap dump (with the indices in the same directory as the heap dump) with my Eclipse MAT GUI. From there, it does not need too much memory to run.

EDIT: I'd just like to add two notes. As far as I know, only the generation of the indices is the memory-intensive part of Eclipse MAT.

After you have the indices, most of your processing in Eclipse MAT will not need that much memory. Doing this from a shell script means I can do it on a headless server (and I normally do, because they're usually the most powerful ones). And if you have a server that can generate a heap dump of that size, chances are you have another server out there that can process a heap dump of that size as well.

Opened a 2GB heap dump with the Eclipse Memory Analyzer GUI using no more than 500MB of memory. Index files were created on the fly when opening the file (took 30 sec). Maybe they improved the tool.

It is more convenient than copying big files back and forth, if it really works this way. A small memory footprint even without any console utilities is a big plus for me. But to be honest, I didn't try it with really big dumps (50+ GB). It would be very interesting to know how much memory is required to open and analyze such big dumps with this tool.

The accepted answer to this related question should provide a good start for you (uses live jmap histograms instead of heap dumps):

Most other heap analysers (I use IBM ) require at least a percentage of RAM more than the heap if you're expecting a nice GUI tool. Other than that, many developers use alternative approaches, like live stack analysis, to get an idea of what's going on.

That said, I must question why your heaps are so large. The effect on allocation and garbage collection must be massive. I'd bet a large percentage of what's in your heap should actually be stored in a database, a persistent cache, etc.
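For the live-histogram approach mentioned above, a minimal sketch might look like the following. It relies on the JDK's jmap -histo:live option, which prints a per-class instance and byte count for a running JVM (and, as far as I know, forces a full GC first, so use it with care on a production process). The helper name top_histogram and the JMAP override variable are assumptions made for this example.

```shell
# Sketch only: top_histogram and the JMAP variable are hypothetical names.
# Prints the first N lines of a live class histogram for a running JVM.
top_histogram() {
    pid="$1"
    lines="${2:-20}"            # number of output lines to keep, default 20
    # JMAP lets you point at a specific JDK's jmap binary; defaults to PATH lookup.
    "${JMAP:-jmap}" -histo:live "$pid" | head -n "$lines"
}
```

Something like top_histogram 12345 30 would then show the largest classes by retained bytes at the top, without ever writing a multi-gigabyte dump file to disk.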

When using MAT on Mac (OS X), you'll find the MemoryAnalyzer.ini file in MemoryAnalyzer.app/Contents/MacOS. Editing it wasn't working for me. Instead, you can create a modified startup command or shell script based on the content of this file and run it from that directory. In my case I wanted a 20 GB heap:

./MemoryAnalyzer -startup./././plugins/org.eclipse.equinox.launcher1.3.100.v201.jar -launcher.library./././plugins/org.eclipse.equinox.launcher.cocoa.macosx.x86641.1.300.v201 -vmargs -Xmx20g -XX:-UseGCOverheadLimit -Dorg.eclipse.swt.internal.carbon.smallFonts -XstartOnFirstThread

Just run this command or script from the Contents/MacOS directory in a terminal to start the GUI with more RAM available.

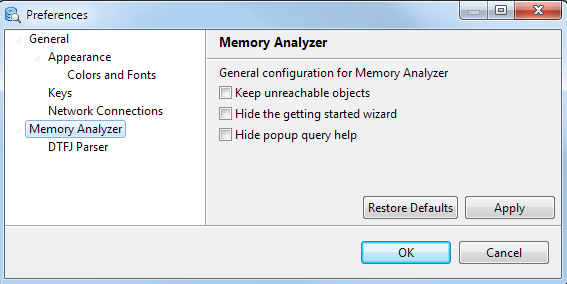

-vm /Library/Java/JavaVirtualMachines/jdk1.8.073.jdk/Contents/Home/bin -vmargs

You can configure it similarly for Windows or Linux operating systems; just change the JDK bin directory path accordingly.

Eclipse.ini Permgen Space

If you are getting a java.lang.OutOfMemoryError: PermGen space error, typically when working on a larger code base, doing a Maven update for large projects, etc., then you should increase the PermGen space. Below is the configuration to increase PermGen space to 512 MB in the eclipse.ini file.